AI Chatbots for Health Advice: What Can Go Wrong and the Precautions You Must Take

As AI tools like OpenAI’s ChatGPT and Google Gemini become part of everyday life, many people turn to them for quick answers about symptoms, diets, supplements, and wellness trends, and that reliance keeps growing. While AI chatbots can be helpful for general education, they are not a replacement for professional medical care. Before asking an AI chatbot for health advice, it is important to understand both its benefits and its limitations.

Here is what you should know and the precautions you should consider.

1. AI is informational, not diagnostic:

AI chatbots provide general information based on patterns in data. They do not examine you, run lab tests, or understand your complete medical history. If you describe symptoms, the chatbot may suggest possible causes, but it cannot diagnose conditions.

Health is complex. The same symptom can have many causes, ranging from minor to serious. Chest pain, fatigue, headaches, or digestive issues all require context that AI cannot fully assess.

Precaution: Use AI for background information only. Always consult a licensed healthcare professional for diagnosis, testing, and treatment decisions.

2. Information may be incomplete or outdated:

Medical guidelines evolve constantly. New research updates recommendations for blood pressure, cholesterol, diabetes, hormone therapy, and more. AI systems are trained on large datasets but may not always reflect the latest clinical guidelines or region-specific recommendations.

In addition, health advice often depends on age, sex, medical history, medications, pregnancy status, and other individual factors.

Precaution: Verify important medical information through trusted sources such as your physician, registered dietitian, pharmacist, or reputable health organizations.

3. AI doesn’t know your full health history:

Even if you provide detailed information, an AI chatbot cannot access your full medical record. It doesn’t know about previous diagnoses, imaging results, family history, or subtle risk factors unless you explicitly mention them — and even then, it may not interpret them correctly.

For example, supplement advice that is generally safe could be risky if you have kidney disease, are on blood thinners, or have autoimmune conditions.

Precaution: Never start, stop, or change medications or supplements based solely on AI advice.

4. Emergency situations require immediate care:

AI chatbots are not emergency responders. If you are experiencing severe symptoms — such as chest pain, shortness of breath, signs of stroke, severe allergic reactions, or suicidal thoughts — immediate medical attention is critical.

Precaution: In an emergency, call your local emergency number. Do not rely on AI for urgent decision-making.

5. Be careful with personal health data:

When using AI tools, remember that you are sharing sensitive information. While companies such as OpenAI implement privacy protections, no online system is entirely risk-free.

Precaution: Avoid sharing sensitive details such as full medical record numbers, insurance information, home address, or identifiable personal data unless you understand the platform’s privacy policies.

6. AI can be helpful for education and preparation:

Despite limitations, AI can be a useful tool when used wisely. It can help you:

- Understand medical terminology before a doctor’s appointment.

- Create a list of questions to ask your provider.

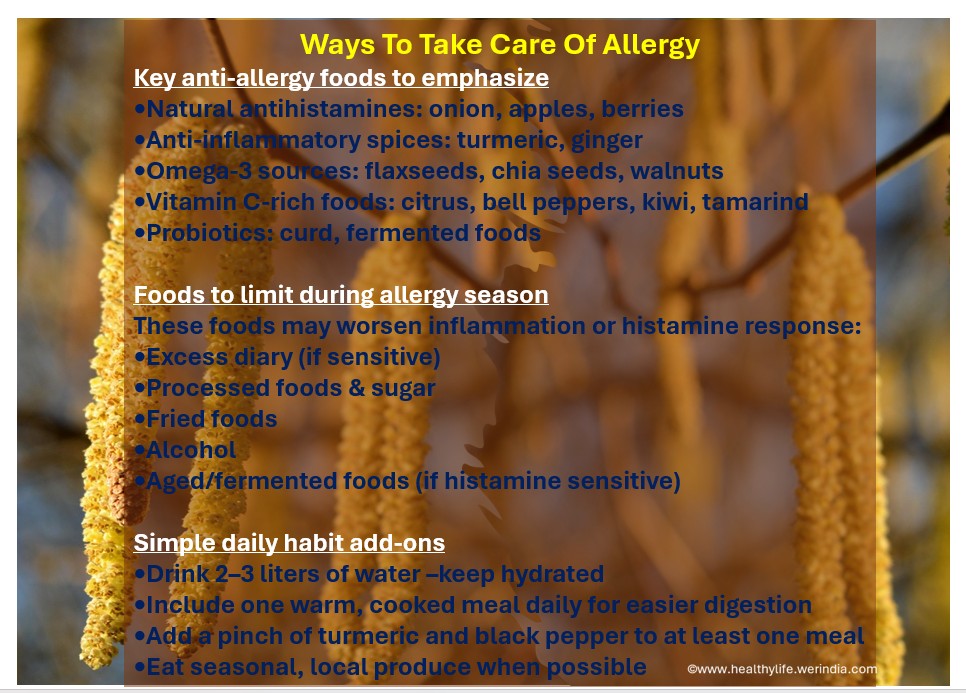

- Learn about general nutrition principles.

- Compare lifestyle strategies for stress, sleep, or exercise.

- Interpret basic lab values at a high level.

For example, you might ask for general information about anti-inflammatory diets, blood pressure–friendly foods, or stress management techniques, and then discuss those ideas with your healthcare provider. As a best practice, use AI as a starting point, not the final authority.

7. Watch for overconfidence in answers:

AI responses can sound confident and polished — even when the information is uncertain. This tone may create a false sense of accuracy.

Precaution: If advice sounds extreme, overly simplified, or “too good to be true,” double-check it. Be especially cautious with claims about miracle cures, rapid weight loss, hormone balancing shortcuts, or detox regimens.

8. Mental health conversations need care:

AI can provide coping strategies and general information about anxiety, stress, or depression. However, it cannot replace therapy or crisis intervention.

Precaution: If you are struggling emotionally, consider speaking with a licensed mental health professional. AI can supplement human support, but it cannot replace it.

AI chatbots are powerful educational tools. They can increase health literacy, clarify confusing topics, and help you prepare for medical appointments. However, they are not doctors, and they cannot personalize care in the way a trained healthcare professional can. The safest approach is to treat AI as a knowledgeable assistant — not a medical authority. Ask questions, stay curious, verify information, and involve qualified healthcare providers in all important health decisions.

Used responsibly, AI can empower you. Used carelessly, it can mislead you. Your health deserves careful, human-guided care.

References:

- www.werindia.com

- www.cnn.com

- www.hyro.ai

- DoxGPT: Your Free, HIPAA-Compliant Workflow Assistant

- PBS News: 5 things you should consider before asking an AI chatbot for health advice

- Image credit: Images created using AI Copilot and AI Gemini respectively on 3-4-2026

Author: Sumana Rao | Posted on: March 5, 2026
